
13.3.2026

Miikka Kataja

Your performance cycle is taking 26 weeks a year. Here's what two people leaders did instead.

Faculty's performance process consumed 26 weeks a year. Juro burned theirs down and rebuilt. Here's what both learned about AI, manager coaching, and continuous feedback.

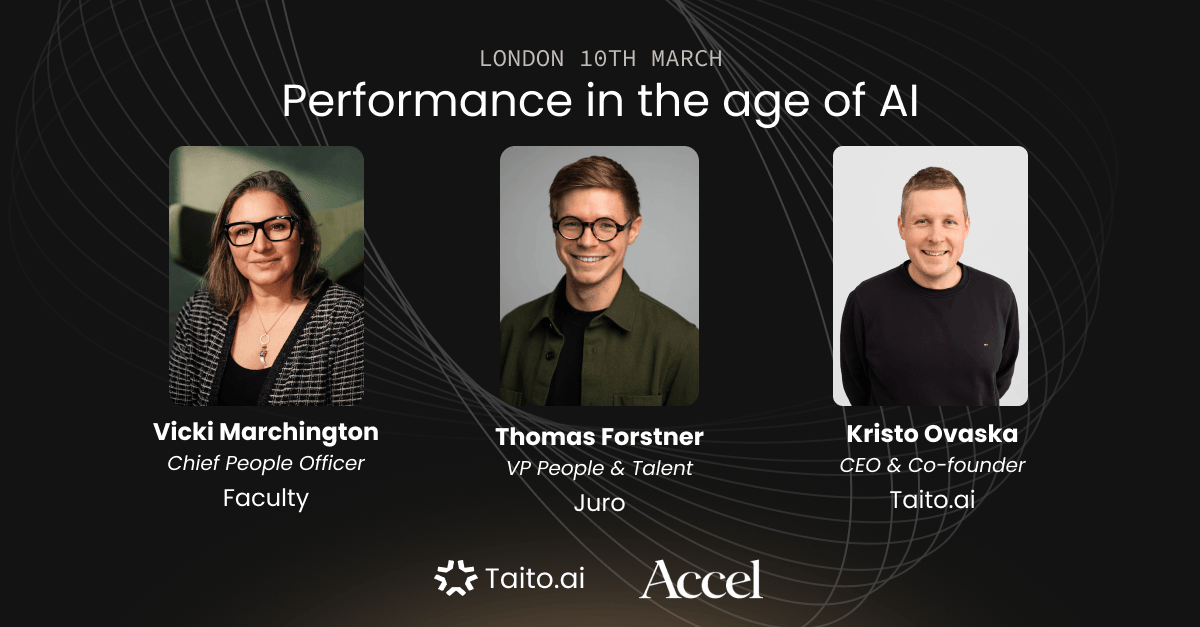

Insights from Vicki Marchington, Chief People Officer at Faculty, and Thomas Forstner, VP of People at Juro — shared at a Taito event in London, March 2026.

TL;DR

- Faculty's performance process was consuming 26 weeks of the year across two annual cycles. The goal was simple: flatten the curve of activity.

- Juro burned down nine years of accumulated process nine months ago and rebuilt from scratch. It now runs lightweight OILS check-ins every two months with 100% manager completion.

- AI's biggest unlock in performance is not automation. It's removing the cognitive load that was blocking managers from giving feedback in the first place.

- A performance system can never satisfy the exec, the manager, and the reviewee simultaneously. You have to pick which problem you're solving.

- "Business strategy trumps AI strategy." Start with the problem you're trying to solve — not the tool you want to use.

Who was in the conversation and why did it matter?

The discussion brought together two people leaders who have taken very different paths to the same destination: a performance system that actually gets used.

Vicki Marchington is Chief People Officer at Faculty, an AI consultancy of around 500 people that was recently acquired by Accenture. Faculty has spent three years dismantling a performance process that was heavy, infrequent, and so culturally embedded that changing it required deliberate effort at every level.

Thomas Forstner is VP of People, Talent and Work Tech at Juro, a legal tech company of around 80 people. He joined when the company had 15 people. He implemented the classic playbook (six-monthly reviews, a leveling framework, separate career conversations), iterated on it for four years, and then, nine months ago, largely burned it down.

Kristo Ovaska, Taito's CEO, hosted the conversation as part of Taito's ongoing series of events on performance in the age of AI, held in London in March 2026.

What made this conversation valuable was the contrast. Faculty is a larger, older, AI-native company that couldn't afford to start over. Juro had the freedom to rebuild. Both arrived at similar conclusions about what performance systems need to do — and what they cannot do for you.

What was wrong with the old approach?

At Faculty, the answer was simple: too much, too infrequently.

"It was taking about 26 weeks of the year to get through those two cycles. There were about 70 different calibrations. It was just an unbelievable amount of work and time and effort."

The goal Vicki set was not to build a better annual review. It was to flatten the curve entirely — replace the massive spikes of activity with something more continuous, lighter, and embedded in how managers already worked.

"All the things that HR people would tell you not to do — it was all being saved up for this big event where everyone got all their feedback in one go. That felt fundamentally wrong."

At Juro, Thomas's reckoning came from a different direction. He describes running what he calls a "fairly cookie cutter" process for most of his first four years: six-monthly reviews, a leveling matrix, separate career and performance conversations. It was the kind of system that looks right on paper and works fine until the company is moving fast enough that six months is simply too long to wait.

"The basic hypothesis was: if we're moving at AI company speed, a six-monthly performance process is way too slow. You need something more continuous. This idea has been around for ages. But until AI came along, it was genuinely hard to implement."

So nine months ago, Thomas and a member of his team effectively burned it down. Almost nothing from the old process survives. What replaced it is simpler, more frequent, and more data-driven — and AI made the change possible in a way it wasn't before.

How does Juro run performance now?

The core of what Thomas built is a lightweight check-in every two months, structured around the OILS framework — Observation, Impact, Listen, Suggestion.

Every manager gives every direct report a structured piece of feedback. It takes as little as five minutes. The completion rate, after nine months of iteration, is 100%.

The AI unlock that made this work was not the feedback itself. It was removing the cognitive load that had always made feedback hard.

"I can't remember what I had for breakfast yesterday. Why would I expect a manager — including myself — to combine six months of someone's work into one concise performance summary? That was never a reasonable ask."

The solution: a scheduled task sends every manager a performance dossier two weeks before each check-in, pulling from Notion, meeting notes, and other connectors. The dossier surfaces relevant context and suggests focus areas. The manager's job is to have the conversation, not to remember everything that happened.
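
The timing described above can be sketched in a few lines of Python. This is only an illustration of the cadence (a check-in every two months, a dossier two weeks earlier), not Juro's actual implementation. The function names and the example start date are hypothetical, and the step that pulls context from Notion and other connectors is deliberately left out.

```python
from datetime import date, timedelta

CHECK_IN_INTERVAL_MONTHS = 2        # from the article: a check-in every two months
DOSSIER_LEAD = timedelta(weeks=2)   # from the article: dossier goes out two weeks before


def add_months(d: date, months: int) -> date:
    """Return the same day-of-month `months` later (day clamped to 28 so it is always valid)."""
    month_index = d.month - 1 + months
    year = d.year + month_index // 12
    month = month_index % 12 + 1
    return date(year, month, min(d.day, 28))


def dossier_schedule(first_check_in: date, cycles: int) -> list[tuple[date, date]]:
    """Return (dossier_send_date, check_in_date) pairs for the next `cycles` check-ins."""
    schedule = []
    for i in range(cycles):
        check_in = add_months(first_check_in, i * CHECK_IN_INTERVAL_MONTHS)
        schedule.append((check_in - DOSSIER_LEAD, check_in))
    return schedule


# Hypothetical example: first check-in on 15 January 2026, three cycles.
for send_on, check_in in dossier_schedule(date(2026, 1, 15), 3):
    print(f"send dossier {send_on}, check-in {check_in}")
```

In practice a scheduler (a cron job or a workflow tool) would call something like `dossier_schedule` and trigger the dossier generation on each send date.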

Thomas built a small suite of custom AI tools — he calls them the "family of Smiths" — to support this. OILsmith turns an unstructured brain dump into a structured OILS-format feedback note. FutureSmith helps employees build a portfolio of evidence for their own promotion case over time.
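
To make the target format concrete, here is a minimal sketch of what a structured OILS note could look like as a data structure. The class, its field descriptions, and the example text are assumptions for illustration. The article names the four sections but does not describe how OILsmith represents them, and the AI step that turns a brain dump into these fields is not shown.

```python
from dataclasses import dataclass


@dataclass
class OilsNote:
    """One piece of feedback in Observation / Impact / Listen / Suggestion order."""
    observation: str  # assumed meaning: what the manager saw or heard
    impact: str       # assumed meaning: the effect it had
    listen: str       # assumed meaning: a question inviting the report's view
    suggestion: str   # assumed meaning: a concrete next step

    def missing_parts(self) -> list[str]:
        """Names of any sections left blank, so an incomplete note can be caught."""
        return [name for name, text in vars(self).items() if not text.strip()]

    def render(self) -> str:
        """Format the note as four labelled lines."""
        return "\n".join(
            f"{name.capitalize()}: {text}" for name, text in vars(self).items()
        )


# Hypothetical example note with the "listen" section left empty.
note = OilsNote(
    observation="The release notes went out a day after the launch.",
    impact="Support had no reference when the first tickets came in.",
    listen="",
    suggestion="Draft the notes alongside the final QA pass.",
)
print(note.missing_parts())
print(note.render())
```

A fixed structure like this is what makes the notes comparable across managers and usable as data later on.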

"You gotta give something in return. You gotta give them a carrot. The AI tool was the carrot — and it opened up an actual learning cycle with managers."

The data from those two-monthly check-ins now feeds into monthly business reviews at the exec level, where Thomas correlates performance scores with business metrics like shipping velocity, EBITDA, and net dollar retention. This, he argues, is more useful than traditional calibration.

What has Faculty learned about AI and performance enablement?

Faculty's journey is further along in some ways and more constrained in others. As an AI company working with government and defence clients — sectors where caution is built into the culture — internal AI adoption moved slower than the external work the company was doing for clients.

The tools that have worked best are the ones that remove friction for managers while keeping the human judgment where it belongs.

"We needed something that operated where people were. Operating in Slack. Frictionless for managers."

Using Taito, Faculty has significantly reduced the number of meetings required to run its performance process. Managers now have access to coaching prompts during feedback, AI-generated goal suggestions, and summarised views of feedback data, so they can keep continuous conversations going without reading every piece of feedback.

The outstanding challenge is cultural, not technical.

"A system doesn't ultimately change anything in your culture. People are still wedded to this way of operating where they save feedback up. We solved the time problem. We haven't yet solved the habit problem."

Vicki's next priority is joining the performance system to Faculty's new AI-powered learning platform — so that when a goal or development area emerges from a feedback conversation, there's a direct path to a relevant resource, project, or learning opportunity. The feedback output feeds directly into a development pathway rather than sitting in a completed form.

What can't AI do in performance management?

Both Vicki and Thomas were direct about where AI reaches its limits.

"You can't automate away the stuff that requires coaching managers. You only want to automate what's repetitive — then use the time you've saved to coach managers to do things they've often never learned."

Thomas described a manager on his team who had always been "too nice" in her feedback — not from bad intent, but from genuine discomfort with difficult conversations. It took a real moment of consequence — a situation where the absence of documented feedback created a legal and operational problem — to shift her approach.

"That was the aha moment. Just by way of failure. You can't replace that with any tool."

The pattern both leaders described is consistent: AI earns its place by handling the repetitive work that drains manager energy and attention. The conversation itself — the coaching, the judgment, the relationship — remains human. What changes is that managers arrive at those conversations better prepared, with less to remember and more to say.

There is also a strategic point worth naming. Both Vicki and Thomas described seeing companies reach for AI tools before they had a clear problem to solve.

"Business strategy trumps AI strategy. What is the outcome you're after? Find the places where AI can help — rather than starting with the tool and working backwards."

What is the deeper takeaway for people leaders?

Performance in the age of AI is not primarily an AI problem. It is still a design problem.

Both Vicki and Thomas approached their performance systems the way a product manager would approach a product: start with a hypothesis, test it, take feedback, iterate or burn it down. The technology made it easier to run lighter processes at higher frequency. It did not make the design decisions for them.

What AI changes is the feasibility calculation. Continuous performance management has been the right answer for at least a decade. The reason it failed in most organisations was execution cost — the cognitive load on managers, the friction of structured feedback, the time required to keep the system alive. AI has materially reduced all three.

The organisations moving fastest on this are not the ones with the most sophisticated AI stack. They are the ones who were clearest about what problem they needed to solve.

What should you read next?

- How Lovable and ŌURA scale performance in the AI era

- What does performance look like at Linear in the age of AI?

- How can AI help you build continuous feedback loops in your organization?

FAQ

1. What is the OILS feedback framework?

OILS stands for Observation, Impact, Listen, Suggestion. It is a structured feedback format that helps managers give specific, actionable feedback rather than vague impressions. Thomas at Juro uses it as the basis for all check-ins, which run every two months.

2. How often should performance check-ins happen?

Juro runs them every two months. Faculty is moving toward a similar cadence. Both found that the frequency matters less than the consistency — predictable, lightweight conversations outperform infrequent, heavy ones.

3. Does continuous performance management replace annual reviews?

Not necessarily. Thomas kept the leveling framework while removing everything else. Vicki still runs cycles but has made them lighter. The goal is to reduce the volume of work that accumulates in a single event, not to eliminate all formal moments.

4. How do you handle calibration without a calibration meeting?

Thomas calibrates against business metrics — correlating performance scores with shipping velocity, EBITDA, and net dollar retention. When scores diverge from business results, that becomes the conversation. Vicki uses Taito to pull ratings and business data together ahead of calibration meetings to focus the discussion on outliers.

5. What is the role of AI in reducing manager cognitive load?

AI surfaces relevant context — meeting notes, recent work, previous feedback — so managers arrive at check-ins prepared rather than relying on memory. It also helps structure raw feedback into consistent formats. The goal is not to generate the feedback, but to remove the preparation work that was making feedback feel like an enormous task.

If you are rebuilding your performance process or thinking about introducing AI into how your team gives feedback, we can walk you through how Faculty, Juro, and other teams have approached this. See how it works.